Autonomous cars with learner plates

In June Google’s self-driving cars broke through the magical million mile mark. Over this distance these vehicles were involved in eleven accidents: eight during city driving and three on the freeway. None of these were the fault of the Google cars. The majority were rear-end collisions, although the cars have also been side-swiped and hit by a car rolling through a stop sign.

Whilst these vehicles typically deal with uncertain situations by simply stopping, this is not acceptable if the technology is to be rolled out successfully. To be effective, self-driving cars will have to interpret prevailing conditions and perceived threats and make split-second decisions based on them.

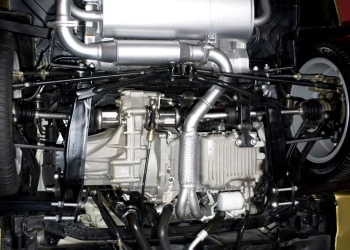

Many elements must come together for this to work. As early as 2007, Boss, the Carnegie Mellon entry that won the DARPA Urban Challenge, used 17 sensors, including nine lasers, four radar systems and a Global Positioning System device, to navigate the obstacle course. The vehicle ran a reliable software architecture made up of a perception component, a mission-planning component and a behavioral executive, which, according to Chris Urmson, director of Google's self-driving car program, are required for autonomous cars to function correctly.
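To make the three-part architecture concrete, here is a minimal sketch of how a perception component, a mission-planning component and a behavioral executive might hand data to one another. It is an illustration only, not the Boss or Google software: the function names, the `Obstacle` type and the 10 m safe gap are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    distance_m: float  # distance ahead of the car, in metres (hypothetical unit)


def perceive(sensor_ranges_m):
    """Perception: turn raw range readings into obstacles, skipping empty returns."""
    return [Obstacle(d) for d in sensor_ranges_m if d is not None]


def plan_mission(route, position_index):
    """Mission planning: pick the next waypoint on a fixed route."""
    return route[min(position_index + 1, len(route) - 1)]


def decide_behavior(obstacles, safe_gap_m=10.0):
    """Behavioral executive: stop if anything is closer than the assumed safe gap."""
    if any(o.distance_m < safe_gap_m for o in obstacles):
        return "stop"
    return "proceed"


def step(sensor_ranges_m, route, position_index):
    """One control cycle: perceive, plan, then decide."""
    obstacles = perceive(sensor_ranges_m)
    waypoint = plan_mission(route, position_index)
    return waypoint, decide_behavior(obstacles)
```

Calling `step([25.0, None, 40.0], ["A", "B", "C"], 0)` would head for waypoint `"B"` and proceed, whereas a reading of 5 m would make the executive stop. A real system would of course fuse many sensors and choose among far richer behaviors.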

Cars laden with laser sensors can create 3-D representations of the world and use those representations to navigate safely through chaotic, unpredictable traffic. However, many of the best-performing algorithms share a common trait: They have been explicitly programmed by humans.

Self-learning artificial intelligence raises the game

Artificial intelligence (AI) is the intelligence exhibited by machines or software, and is defined as "the study and design of intelligent agents", where an intelligent agent is a system that perceives its environment and takes actions that maximize its chances of success. John McCarthy, who coined the term in 1955, defines it as "the science and engineering of making intelligent machines".

Image Credit: GenyMobile

In the case of Google’s self-driving car, the computing power is provided by three multi-core laptops that use AI to recognize traffic signs, traffic lanes and fingerprints from other road users. The data processed from the 3D radar and GPS coordinates tracks the car on Google Maps. Using a common database of infrastructure such as traffic signs and road obstacles, together with magnetic and acceleration sensors, a supervisory system drives the stepper motor to turn the steering wheel, and accelerates and brakes the vehicle as required.

Moving toward true AI, deep learning is a set of algorithms in machine learning that attempt to model high-level abstractions in data by using architectures composed of multiple non-linear transformations. Various deep learning architectures such as deep neural networks, convolutional deep neural networks, and deep belief networks have been applied to fields like computer vision, automatic speech recognition, natural language processing, and music/audio signal recognition where they have proven to be impressively responsive and accurate.
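The phrase "multiple non-linear transformations" can be sketched in a few lines. The toy network below passes an input through two stacked layers, each a weighted sum followed by a tanh non-linearity. The weights are made-up values chosen for illustration, and nothing here is trained; it only shows the shape of the computation, not any production architecture.

```python
import math


def layer(inputs, weights, biases):
    """One non-linear transformation: a weighted sum per node, then tanh."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Feed the input through each layer in turn; depth is the number of layers."""
    activations = inputs
    for weights, biases in layers:
        activations = layer(activations, weights, biases)
    return activations


# Hypothetical weights: 2 inputs -> 3 hidden nodes -> 1 output.
toy_layers = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = forward([1.0, 2.0], toy_layers)
```

Each extra entry in `toy_layers` adds another level of abstraction on top of the previous one, which is what makes a network "deep".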

These new technologies not only make roadway object-recognition possible but also improve the Human Machine Interface by opening up possibilities in facial and gesture recognition.

There are many machine learning techniques, including Bayesian networks, hidden Markov models, neural networks of various sorts and Boltzmann machines. The differences between them are largely technical. What the techniques have in common is that they consist of a large set of nodes that connect with one another and make interrelated decisions about how to behave.

But this complexity has a downside. For decades, deep networks, though theoretically powerful, didn't work well in practice. Training them was computationally intractable.

But in 2006, Geoffrey Hinton, a computer science professor at the University of Toronto, published a paper widely described as a breakthrough. He devised a way to train deep networks one layer at a time, which allowed them to perform in the real world.

With 95% of driving decisions relying on visual input, vision is set to be the primary area of focus for any driving AI. Already many machines have the ability to recognize objects as accurately as humans. According to a paper recently published by a team of Microsoft researchers in Beijing, their computer vision system based on deep CNNs had for the first time eclipsed the ability of people to classify objects defined in the ImageNet 1000 challenge.

Only five days after Microsoft announced that its neural network had beaten the human benchmark of 5.1% errors with a 4.94% error rate, Google announced it had one-upped Microsoft by 0.04%.

AI presents major challenges for system on chip suppliers

The bigger and perhaps more pertinent issues for the semiconductor industry are: Will "deep learning" ever migrate into smartphones, wearable devices, or the tiny computer vision SoCs used in highly automated cars? Has anybody come up with SoC architecture optimized for neural networks? If so, what does it look like?

"There is no question that deep learning is a game-changer," said Jeff Bier, a founder of the Embedded Vision Alliance. In computer vision and object recognition, for example, deep learning is very powerful. "The caveat is that it’s still an empirical field. People are trying different things," he said.

Nvidia, for example, is going after deep learning via three products. During his keynote speech at GTC, CEO Jen-Hsun Huang trotted out Titan X, Nvidia’s new GeForce gaming GPU, which the company describes as "uniquely suited for deep learning." He presented Nvidia’s Digits Deep Learning GPU training system, a software application designed to accelerate the development of high-quality deep neural networks by data scientists and researchers. He also unveiled Digits DevBox, a deskside deep learning appliance built specifically for the task, powered by four TITAN X GPUs and loaded with the DIGITS training system software.

Asked about Nvidia’s plans for its GPU in embedded vision SoCs for Advanced Driver Assistance System (ADAS), Danny Shapiro, senior director of automotive, said Nvidia isn’t pushing GPU as a chip company. "We are offering car OEMs a complete system – both ‘cloud’ and a vehicle computer that can take advantage of neural networks."

A case in point is Nvidia’s DRIVE PX platform -- based on the Tegra X1 processor -- unveiled at the International Consumer Electronics Show earlier this year. The company describes Drive PX as a vehicle computer capable of using machine learning, saying that it will help cars not just sense but "interpret" the world around them.

While current ADAS technology can detect some objects, do basic classification, alert the driver and, in some cases, stop the vehicle, Drive PX goes to the "next level," with Shapiro commenting that Drive PX now has the ability to differentiate "an ambulance from a delivery truck."

By leveraging deep learning, a car equipped with Drive PX, for example, can "get smarter and smarter, every hour and every mile it drives," claimed Shapiro. Learning that takes place on the road feeds back into the data center and the car adds knowledge via periodic software updates, Shapiro said.

Audi is the first company to use the Drive PX in developing its future automotive self-piloting capabilities, while Jaguar Land Rover is in the process of developing what they call "a truly intelligent self-learning vehicle".

Using the latest machine learning and artificial intelligence techniques, their proposed "self-learning car" will offer a comprehensive array of services to the driver. By being able to recognise who is in the car, it will be able to learn their preferences and driving style.

Dr. Wolfgang Epple, Director of Research and Technology for Jaguar Land Rover, explains: "Up until now most self-learning car research has only focused on traffic or navigation prediction. We want to take this a significant step further and our new learning algorithm means information learnt about you will deliver a completely personalised driving experience and enhance driving pleasure."

Can AI make ethical decisions?

As the technology advances, however, and cars become capable of interpreting more complex scenes, automated driving systems may need to make split-second decisions that raise real ethical questions.

At a recent industry event, Chris Gerdes, a professor at Stanford University, gave an example of one such scenario: a child suddenly dashing into the road, forcing the self-driving car to choose between hitting the child or swerving into an oncoming truck.

"As we see this with human eyes, one of these obstacles has a lot more value than the other," Gerdes said. "What is the car’s responsibility?"

Gerdes and Patrick Lin, a professor of philosophy at Cal Poly, organized a workshop at Stanford earlier this year that brought together philosophers and engineers to discuss the issue. They programmed different ethical settings into typical software that controls automated vehicles, and then tested the code in simulations and even in real vehicles. Such settings might, for example, tell a car to prioritize avoiding humans over avoiding parked vehicles, or not to swerve for squirrels.
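One way to picture such an "ethical setting" is as a table of costs that the planner consults when comparing manoeuvres. The sketch below is purely hypothetical and is not the code used at the Stanford workshop: the cost values, the swerve penalty and the manoeuvre names are all assumptions chosen to reproduce the two examples given, namely avoiding humans before parked vehicles and not swerving for squirrels.

```python
# Hypothetical costs: higher means "avoid hitting this more strongly".
ETHICAL_SETTINGS = {"human": 1000.0, "parked_vehicle": 10.0, "squirrel": 0.0}

# Assumed small cost for leaving the lane, so the car does not swerve needlessly.
SWERVE_PENALTY = 1.0


def manoeuvre_cost(name, objects_hit, settings):
    """Total cost of a manoeuvre: everything it would hit, plus any swerve penalty."""
    cost = sum(settings[obj] for obj in objects_hit)
    if name != "stay_in_lane":
        cost += SWERVE_PENALTY
    return cost


def choose(options, settings=ETHICAL_SETTINGS):
    """Pick the manoeuvre with the lowest cost under the given settings.

    `options` maps a manoeuvre name to the list of objects it would hit.
    """
    return min(options, key=lambda name: manoeuvre_cost(name, options[name], settings))
```

With these numbers, `choose({"stay_in_lane": ["human"], "swerve_right": ["parked_vehicle"]})` picks the swerve, while `choose({"stay_in_lane": ["squirrel"], "swerve_right": []})` stays in lane. Changing the table changes the behavior, which is exactly why the choice of settings is an ethical question rather than a purely technical one.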

"When you ask a car to make a decision, you have an ethical dilemma," says Adriano Alessandrini, a researcher working on automated vehicles at the University of Rome La Sapienza, in Italy. "You might see something in your path, and you decide to change lanes, and as you do, something else is in that lane. So this is an ethical dilemma."

And whilst this technology is very exciting, industry luminary Elon Musk cautions that humanity may become the architect of its own demise. Speaking at an event in San Francisco, he said: "I hope we’re not just the biological boot loader for digital superintelligence. Unfortunately, that is increasingly probable."